
ZFS Commands and Operations Reference

- The ZFS operations below were run on a PVE (Proxmox Virtual Environment) host.

Basic ZFS Syntax

Setting Up a New Disk for ZFS

- Create a ZFS partition, e.g. on /dev/sdb

fdisk /dev/sdb

- g : create a new GPT disklabel on the disk

- n : create a new partition

- t : 48 - Solaris /usr & Apple ZFS

- w : write the changes to disk

- Create the pool with the ZFS tools, e.g. /dev/sdb2 → ssd-zpool

zpool create -f -o ashift=12 ssd-zpool /dev/sdb2
zfs set compression=lz4 atime=off ssd-zpool
zpool list

root@TP-PVE-249:~# zpool list
NAME        SIZE  ALLOC   FREE  EXPANDSZ   FRAG    CAP  DEDUP  HEALTH  ALTROOT
rpool       928G   169G   759G         -     8%    18%  1.00x  ONLINE  -
ssd-zpool   448G   444K   448G         -     0%     0%  1.00x  ONLINE  -

- Then, in the PVE web interface, go to Datacenter → Storage → Add → ZFS

- Enter an ID, e.g. ssd-zfs

- Select the ZFS Pool: ssd-zpool

- The ZFS disk is now available as PVE storage
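The pool-creation steps above can be collected into one script. The following is a minimal sketch, not a tested procedure: it only prints the commands unless DRY_RUN=0 is set, the `run` and `create_pool` helpers are illustrative names, and the `pvesm add zfspool` line is an assumed command-line equivalent of the web interface step that should be checked against the installed PVE version.

```shell
#!/bin/sh
# Dry-run sketch: prints each command instead of running it.
# Set DRY_RUN=0 to execute for real (this overwrites data on $PART).
POOL="ssd-zpool"       # pool name from the example above
PART="/dev/sdb2"       # partition from the example above
STORAGE_ID="ssd-zfs"   # PVE storage ID from the example above
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical helper: echo the command in dry-run mode, otherwise run it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

create_pool() {
    run zpool create -f -o ashift=12 "$POOL" "$PART"
    run zfs set compression=lz4 atime=off "$POOL"
    run zpool list "$POOL"
    # Assumption: CLI counterpart of Datacenter -> Storage -> Add -> ZFS
    run pvesm add zfspool "$STORAGE_ID" --pool "$POOL"
}

create_pool
```

Reviewing the printed commands before setting DRY_RUN=0 is the point of the design, since `zpool create -f` does not ask for confirmation.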

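Limiting How Much RAM ZFS Uses for Cache

- By default, ZFS uses up to 50% of the host's RAM as cache. To change this, set zfs_arc_max. For the sake of ZFS performance, zfs_arc_max should not be lower than 2 GiB base + 1 GiB per TiB of ZFS storage. In other words, a 1 TiB ZFS pool needs at least 2 + 1 = 3 GiB of RAM.

- Example: limit the ZFS cache to at most 3 GiB of RAM

- Edit /etc/modprobe.d/zfs.conf so that the zfs_arc_max value persists across reboots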

root@aac:~# echo "$[3*1024*1024*1024]"
3221225472

vi /etc/modprobe.d/zfs.conf

options zfs zfs_arc_max=3221225472

- If root is not on ZFS, the setting can be applied immediately

echo "$[3 * 1024*1024*1024]" >/sys/module/zfs/parameters/zfs_arc_max

- If root is on ZFS, run the following commands; the setting takes effect after the reboot

update-initramfs -u -k all
reboot
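The sizing rule and the byte conversion above can be scripted. This is a minimal sketch in POSIX sh; the function names `gib_to_bytes` and `min_arc_gib` are illustrative and not part of ZFS, and `$(( ))` is used in place of the older `$[ ]` arithmetic form.

```shell
#!/bin/sh
# Convert a whole number of GiB to bytes.
gib_to_bytes() {
    echo $(( $1 * 1024 * 1024 * 1024 ))
}

# Rule of thumb from the text: 2 GiB base + 1 GiB per TiB of pool storage.
# $1 is the pool size in whole TiB.
min_arc_gib() {
    echo $(( 2 + $1 ))
}

pool_tib=1
arc_gib=$(min_arc_gib "$pool_tib")

# Print the line that would go into /etc/modprobe.d/zfs.conf
echo "options zfs zfs_arc_max=$(gib_to_bytes "$arc_gib")"
# prints: options zfs zfs_arc_max=3221225472
```

The script only prints the configuration line; writing it to /etc/modprobe.d/zfs.conf is left as a manual step so the value can be reviewed first.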

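Re-adding a ZFS Data Disk to a Host

After the system disk failed and the host was reinstalled, the data disk that held the ZFS pool is added back to the reinstalled host.

- The data disk is /dev/nvme0n1; fdisk -l /dev/nvme0n1 shows the following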

root@nuc:/etc/postfix# fdisk -l /dev/nvme0n1
Disk /dev/nvme0n1: 953.9 GiB, 1024209543168 bytes, 2000409264 sectors
Disk model: PLEXTOR PX-1TM9PeGN
Units: sectors of 1 * 512 = 512 bytes
Sector size (logical/physical): 512 bytes / 512 bytes
I/O size (minimum/optimal): 512 bytes / 512 bytes
Disklabel type: gpt
Disk identifier: 31E1D4CF-870D-E741-B28E-2305FDF86533

Device              Start        End    Sectors   Size Type
/dev/nvme0n1p1       2048 2000392191 2000390144 953.9G Solaris /usr & Apple ZFS
/dev/nvme0n1p9 2000392192 2000408575      16384     8M Solaris reserved 1

- zdb -l /dev/nvme0n1p1 shows the following

root@nuc:/etc/postfix# zdb -l /dev/nvme0n1p1
------------------------------------
LABEL 0
------------------------------------
    version: 5000
    name: 'local-zfs'
    state: 0
    txg: 5514533
    pool_guid: 1902468729180364296
    errata: 0
    hostid: 585158084
    hostname: 'nuc'
    top_guid: 1144094455533164821
    guid: 1144094455533164821
    vdev_children: 1
    vdev_tree:
        type: 'disk'
        id: 0
        guid: 1144094455533164821
        path: '/dev/nvme0n1p1'
        devid: 'nvme-PLEXTOR_PX-1TM9PeGN_P02927100475-part1'
        phys_path: 'pci-0000:6d:00.0-nvme-1'
        whole_disk: 1
        metaslab_array: 65
        metaslab_shift: 33
        ashift: 12
        asize: 1024195035136
        is_log: 0
        DTL: 25995
        create_txg: 4
    features_for_read:
        com.delphix:hole_birth
        com.delphix:embedded_data
    labels = 0 1 2 3

- Run zpool import -d /dev/nvme0n1p1 to check whether local-zfs can be imported in its previous state

root@nuc:/etc/postfix# zpool import -d /dev/nvme0n1p1
   pool: local-zfs
     id: 1902468729180364296
  state: ONLINE
 status: The pool was last accessed by another system.
 action: The pool can be imported using its name or numeric identifier and the '-f' flag.
    see: http://zfsonlinux.org/msg/ZFS-8000-EY
 config:

        local-zfs   ONLINE
          nvme0n1   ONLINE

- As the message above indicates, the -f flag is required to bring local-zfs back into the system

zpool import -f local-zfs

- Reference URL -
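Before importing, it can help to confirm which pool a partition belongs to. The sketch below pulls the pool name and pool_guid out of `zdb -l` output; the function name `label_pool_info` is illustrative, and the parsing assumes the `key: value` layout shown in the label dump above. The sample input is a shortened copy of that dump.

```shell
#!/bin/sh
# Print "pool_name pool_guid" from `zdb -l` output read on stdin.
label_pool_info() {
    awk -v q="'" '
        $1 == "name:"      { gsub(q, "", $2); name = $2 }   # strip the quotes
        $1 == "pool_guid:" { guid = $2 }
        END { if (name != "" && guid != "") print name, guid }
    '
}

# Demo with a shortened copy of the label shown above.
label_pool_info <<'EOF'
    version: 5000
    name: 'local-zfs'
    state: 0
    pool_guid: 1902468729180364296
    hostname: 'nuc'
EOF
# prints: local-zfs 1902468729180364296
```

On a real host the same function would be fed from the device, for example `zdb -l /dev/nvme0n1p1 | label_pool_info`.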

Renaming a zpool

- The zpool is currently named ssd-zfs and should be renamed to ssd-zpool

zpool export ssd-zfs
zpool import ssd-zfs ssd-zpool

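Removing a zpool

- The zpool is named ssd2-zfs. Because its fragmentation exceeded 20%, it is to be destroyed and rebuilt. First move all files on ssd2-zfs to another zpool, then destroy the pool with the following command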

zpool destroy ssd2-zfs

Handling a Newly Installed ZFS Disk That Carries an Existing zpool Name

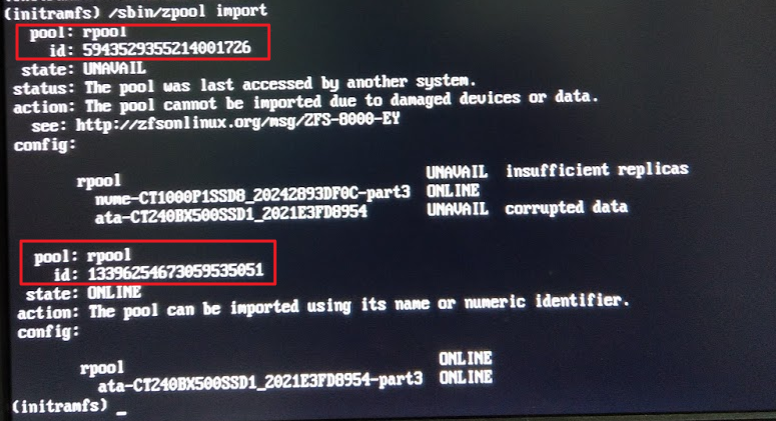

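- The simplest approach is to wipe the disk with fdisk on another machine before installing it. If installing the disk in the host is complicated and the error below only appears after the disk is already installed and the host is booting (e.g. for rpool):

Message: cannot import 'rpool' : more than one matching pool
import by numeric ID instead
Error: 1

the zpool import command can be used to resolve it.

- Identify the id of the rpool that is actually wanted and import it by that id, e.g. 13396254673059535051, as follows:

/sbin/zpool import -N 13396254673059535051

- If no further message appears, the import succeeded. Type exit to continue the normal boot process

- Once the system has booted, use fdisk as soon as possible to clean up the newly added disk that carries the duplicate zpool name

- Otherwise the same procedure has to be repeated on the next boot.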
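To find the numeric id, the output of a bare `zpool import` (which lists importable pools without importing them) can be reduced to name and id pairs. This is a minimal sketch; `list_importable` is an illustrative name, the parsing assumes the `pool:` and `id:` lines shown in the import output earlier in this document, and the second id in the sample is made up for the demo.

```shell
#!/bin/sh
# Print one "pool_name pool_id" pair per importable pool,
# reading `zpool import` output on stdin.
list_importable() {
    awk '
        $1 == "pool:" { name = $2 }
        $1 == "id:"   { print name, $2 }
    '
}

# Demo: two pools that share the name rpool.
# The second id is a hypothetical value, used only for illustration.
list_importable <<'EOF'
   pool: rpool
     id: 13396254673059535051
  state: ONLINE

   pool: rpool
     id: 1111111111111111111
  state: ONLINE
EOF
```

On the host this would be run as `zpool import | list_importable`; the disk listed under each pool's config section then shows which id belongs to the pool to keep.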

Replacing a ZFS Disk When a zpool Is Degraded

- e.g. pbs-zpool

zpool status pbs-zpool

  pool: pbs-zpool
 state: DEGRADED
status: One or more devices is currently being resilvered. The pool will
        continue to function, possibly in a degraded state.
action: Wait for the resilver to complete.
  scan: resilver in progress since Thu Oct 29 15:43:26 2020
        355G scanned at 49.8M/s, 96.9G issued at 13.6M/s, 1.55T total
        97.1G resilvered, 6.09% done, 1 days 07:14:16 to go
config:

        NAME        STATE     READ WRITE CKSUM
        pbs-zpool   DEGRADED     0     0     0
          sdb1      DEGRADED    11    52     0  too many errors

errors: No known data errors

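- Assume the newly installed disk is sdf. First use fdisk to create a ZFS partition on sdf

- Attach the newly created sdf1 to pbs-zpool

zpool attach pbs-zpool sdb1 sdf1

If the command runs for a few seconds without printing an error, use

zpool status pbs-zpool

to check the repair and resilvering progress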

  pool: pbs-zpool
 state: DEGRADED
status: One or more devices is currently being resilvered. The pool will
        continue to function, possibly in a degraded state.
action: Wait for the resilver to complete.
  scan: resilver in progress since Thu Oct 29 15:43:26 2020
        355G scanned at 46.4M/s, 101G issued at 13.2M/s, 1.55T total
        102G resilvered, 6.34% done, 1 days 08:09:31 to go
config:

        NAME        STATE     READ WRITE CKSUM
        pbs-zpool   DEGRADED     0     0     0
          mirror-0  DEGRADED     0     0     0
            sdb1    DEGRADED    11    52     0  too many errors
            sdf1    ONLINE       0     0     0  (resilvering)

errors: No known data errors

- Once resilvering of sdf1 into pbs-zpool has finished, the faulty sdb1 can be removed

zpool clear pbs-zpool
zpool detach pbs-zpool sdb1
zpool status pbs-zpool

The output should look like this

  pool: pbs-zpool
 state: ONLINE
  scan: resilvered 1.35T in 1 days 04:24:31 with 0 errors on Mon Nov 2 10:25:43 2020
config:

        NAME        STATE     READ WRITE CKSUM
        pbs-zpool   ONLINE       0     0     0
          sdf1      ONLINE       0     0     0

errors: No known data errors
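Because resilvering took more than a day in this example, waiting for it can be automated. The sketch below polls `zpool status` and returns once the "resilver in progress" line seen in the output above disappears. The function names and the 60-second default interval are assumptions for illustration; nothing runs until `wait_for_resilver` is called.

```shell
#!/bin/sh
# Succeed (exit 0) if the status text on stdin reports an active resilver.
resilver_in_progress() {
    grep -q "resilver in progress"
}

# Poll the pool every $2 seconds (default 60) until resilvering is over.
# Note: the loop also ends if `zpool status` itself fails, so check the
# final pool state by hand before detaching anything.
wait_for_resilver() {
    pool="$1"
    interval="${2:-60}"
    while zpool status "$pool" | resilver_in_progress; do
        sleep "$interval"
    done
}

# Example call (commented out so the sketch has no side effects):
# wait_for_resilver pbs-zpool 300 && zpool status pbs-zpool
```

The detach of sdb1 is deliberately left out of the script, so that the final `zpool status` output can be reviewed before the old disk is removed.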

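Expanding a zpool After the Underlying Disk Has Grown

- Use cases -

- Disk replacement, e.g. a 4 TB disk replaced by an 8 TB disk → the new 8 TB disk is attached to the zpool and mirrored with the original 4 TB disk, then the 4 TB disk is detached. The zpool now sits on an 8 TB disk but still reports only the original 4 TB

- After the underlying virtual disk of a VM has been enlarged, the zpool still sees the original size

- Procedure, e.g. pbs-zpool @ /dev/sdd1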

zpool set autoexpand=on pbs-zpool
parted /dev/sdd
resizepart 1
[X.XGB]?
quit
zpool online -e pbs-zpool sdd1
df -h

The actual session output looks like this

root@TP-PVE-252:/# parted /dev/sdd
GNU Parted 3.2
Using /dev/sdd
Welcome to GNU Parted! Type 'help' to view a list of commands.
(parted) resizepart
Partition number? 1
End? [8002GB]?
Error: Partition(s) 1 on /dev/sdd have been written, but we have been unable to inform the kernel of the change, probably because it/they are in use. As a result, the old partition(s) will remain in use. You should reboot now before making further changes.
Ignore/Cancel?
Ignore/Cancel? I
(parted) quit
Information: You may need to update /etc/fstab.

root@TP-PVE-252:/# zpool online -e pbs-zpool sdd1
root@TP-PVE-252:/# df -h
Filesystem      Size  Used Avail Use% Mounted on
udev            3.9G     0  3.9G   0% /dev
tmpfs           796M   28M  768M   4% /run
:
:
tmpfs           796M     0  796M   0% /run/user/0
pbs-zpool       7.1T  1.4T  5.7T  20% /pbs-zpool
root@TP-PVE-252:/#

Adding an SSD as a ZFS Cache

- Assume six 1 TB SAS disks form a ZFS raidz2, and one 400 GB SSD (/dev/nvme0n1) is added as cache

root@pve-1:~# zpool status
  pool: rpool
 state: ONLINE
  scan: scrub repaired 0B in 0 days 00:00:10 with 0 errors on Fri Dec 3 17:24:52 2066
config:

        NAME                              STATE     READ WRITE CKSUM
        rpool                             ONLINE       0     0     0
          raidz2-0                        ONLINE       0     0     0
            scsi-35000c50099b187df-part3  ONLINE       0     0     0
            scsi-35000c50095c609f7-part3  ONLINE       0     0     0
            scsi-35000c50099b18c6b-part3  ONLINE       0     0     0
            scsi-35000c50099b185e3-part3  ONLINE       0     0     0
            scsi-35000c50099b18453-part3  ONLINE       0     0     0
            scsi-35000c50099b18ebb-part3  ONLINE       0     0     0

- Command to add nvme0n1 as the cache device for rpool

zpool add rpool cache nvme0n1

root@pve1:~# zpool status
  pool: rpool
 state: ONLINE
  scan: none requested
config:

        NAME                              STATE     READ WRITE CKSUM
        rpool                             ONLINE       0     0     0
          raidz2-0                        ONLINE       0     0     0
            scsi-35000c50099b187df-part3  ONLINE       0     0     0
            scsi-35000c50095c609f7-part3  ONLINE       0     0     0
            scsi-35000c50099b18c6b-part3  ONLINE       0     0     0
            scsi-35000c50099b185e3-part3  ONLINE       0     0     0
            scsi-35000c50099b18453-part3  ONLINE       0     0     0
            scsi-35000c50099b18ebb-part3  ONLINE       0     0     0
        cache
          nvme0n1                         ONLINE       0     0     0

- Check zpool usage

zpool iostat -v

root@pve1:~# zpool iostat -v
                                    capacity     operations     bandwidth
pool                              alloc   free   read  write   read  write
--------------------------------  -----  -----  -----  -----  -----  -----
rpool                              180G  5.28T      0    243  2.62K  5.17M
  raidz2                           180G  5.28T      0    243  2.62K  5.17M
    scsi-35000c50099b187df-part3      -      -      0     39    447   860K
    scsi-35000c50095c609f7-part3      -      -      0     40    352   889K
    scsi-35000c50099b18c6b-part3      -      -      0     42    536   905K
    scsi-35000c50099b185e3-part3      -      -      0     40    456   879K
    scsi-35000c50099b18453-part3      -      -      0     40    360   882K
    scsi-35000c50099b18ebb-part3      -      -      0     40    530   878K
cache                                 -      -      -      -      -      -
  nvme0n1                         64.9G   308G      0     15      2  1.56M
--------------------------------  -----  -----  -----  -----  -----  -----

Removing a ZFS Cache Disk

- Command to remove the cache device sdc from zfs2TB

zpool remove zfs2TB sdc

- Before

# zpool status zfs2TB
  pool: zfs2TB
 state: ONLINE
  scan: scrub repaired 0B in 0 days 00:55:00 with 0 errors on Sun Dec 13 01:19:07 2020
config:

        NAME                                         STATE     READ WRITE CKSUM
        zfs2TB                                       ONLINE       0     0     0
          ata-WDC_WD2002FAEX-007BA0_WD-WMAY03424496  ONLINE       0     0     0
        cache
          sdc                                        ONLINE       0     0     0

- After

# zpool status zfs2TB
  pool: zfs2TB
 state: ONLINE
  scan: scrub repaired 0B in 0 days 00:55:00 with 0 errors on Sun Dec 13 01:19:07 2020
config:

        NAME                                         STATE     READ WRITE CKSUM
        zfs2TB                                       ONLINE       0     0     0
          ata-WDC_WD2002FAEX-007BA0_WD-WMAY03424496  ONLINE       0     0     0
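The before and after comparison can also be checked by script. The sketch below lists the devices that appear under the `cache` heading of `zpool status` output; `cache_devices` is an illustrative name, and the parsing assumes the config layout shown above, where a section ends at a blank line or at the next section heading.

```shell
#!/bin/sh
# Print the device names listed under "cache" in `zpool status` output (stdin).
cache_devices() {
    awk '
        NF == 1 && $1 == "cache" { in_cache = 1; next }   # section starts
        in_cache && NF == 0      { in_cache = 0 }         # blank line ends it
        in_cache && ($1 == "logs" || $1 == "spares" || $1 == "special" || $1 == "errors:") { in_cache = 0 }
        in_cache { print $1 }
    '
}

# Demo with a shortened copy of the "before" output above.
cache_devices <<'EOF'
        NAME        STATE     READ WRITE CKSUM
        zfs2TB      ONLINE       0     0     0
          ata-WDC_WD2002FAEX-007BA0_WD-WMAY03424496  ONLINE  0  0  0
        cache
          sdc       ONLINE       0     0     0

errors: No known data errors
EOF
# prints: sdc
```

After `zpool remove zfs2TB sdc`, running `zpool status zfs2TB | cache_devices` should print nothing.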

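Adding a Metadata Special Device to a zpool to Speed Up Reads

- Reference

- Metadata is the data ZFS stores about the file system itself. Because it is read and written frequently, it has a large impact on system performance, so adding an SSD as a special device to a ZFS pool built on ordinary HDDs can improve overall access performance. Note, however, that if the special device fails, the entire zpool is lost, which is why the special device is built as a mirror of two physical SSDs. Also, once a special device has been added to a zpool, the change should be treated as irreversible, so think carefully before configuring it.

- Syntax: zpool add <pool> special mirror <device1> <device2>

- e.g. add /dev/nvme0n1 and /dev/nvme1n1 to pbs-zpool as the special device

zpool add pbs-zpool special mirror /dev/nvme0n1 /dev/nvme1n1

After adding: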

root@h470:~# zpool status pbs-zpool
  pool: pbs-zpool
 state: ONLINE
  scan: scrub repaired 0B in 02:55:43 with 0 errors on Sun Nov 12 03:19:52 2023
config:

        NAME         STATE     READ WRITE CKSUM
        pbs-zpool    ONLINE       0     0     0
          sda1       ONLINE       0     0     0
        special
          nvme0n1    ONLINE       0     0     0
          nvme1n1    ONLINE       0     0     0

- To watch the I/O activity, use the following command

watch zpool iostat -v pbs-zpool
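Since adding a special device is hard to undo, previewing the change first is worthwhile. The sketch below uses the `-n` option of `zpool add`, which displays the resulting configuration without changing the pool. The `check_mirror_pair` helper is an illustrative addition that refuses anything other than two distinct devices, and the preview only runs on a host where `zpool` is installed.

```shell
#!/bin/sh
POOL="pbs-zpool"       # pool from the example above
DEV1="/dev/nvme0n1"    # first SSD from the example above
DEV2="/dev/nvme1n1"    # second SSD from the example above

# Succeed only when two different, non-empty device names are given,
# so the special vdev is never created without a mirror partner.
check_mirror_pair() {
    [ -n "$1" ] && [ -n "$2" ] && [ "$1" != "$2" ]
}

if check_mirror_pair "$DEV1" "$DEV2" && command -v zpool >/dev/null 2>&1; then
    # -n: show the layout that would result, without modifying the pool
    zpool add -n "$POOL" special mirror "$DEV1" "$DEV2" \
        || echo "preview failed, nothing was changed" >&2
fi
```

If the previewed layout looks right, the same command without `-n` performs the actual change.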